As part of the Agents & Robotics HackXelerator, April 2026.

When I first coined the term DevEthicsOps back in 2022 at the DevOps Conference, it was just a theoretical concept: the idea that ethical considerations shouldn’t be an audit at the end of an engineering project, but a continuous practice embedded in how we build software—much like security or reliability.

Since then, much has changed, particularly with the rise of artificial intelligence and autonomous agents. The agent policy pipeline I built for the Agents & Robotics HackXelerator is an opportunity to demonstrate what DevEthicsOps might look like in practice, using multi-agent simulation, MCP servers, CI/CD pipelines, and LLM observability.

The experiment: a job market

I needed a concrete example. I chose to model a future job market shaped by AI—one in which there aren’t enough jobs for everyone.

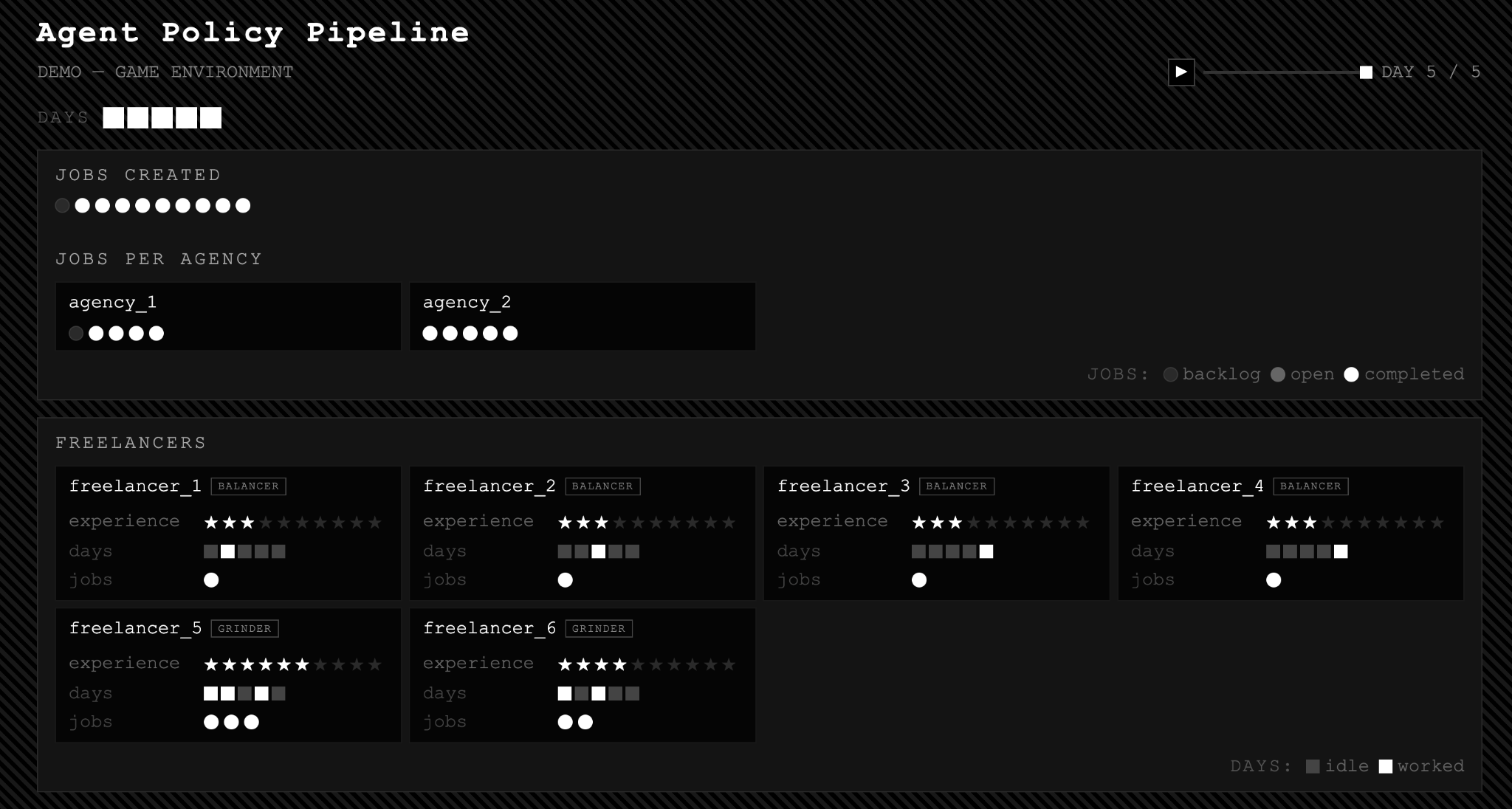

This is an ethically charged scenario by design, where the challenge is not simply to optimize outcomes for a few, but to avoid exclusion. I built a classical simulation in which agencies match freelancers to jobs. Freelancers have different goals: some are grinders, seeking to maximize work and skill acquisition; others are balancers, preferring a sustainable pace.

Without policy guidance, the agencies behave predictably: they optimize for the best match. Grinders—always available, always eager—receive most of the work, while balancers go idle.
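To make that dynamic concrete, here is a minimal sketch of greedy matching. It is not the simulation's actual code: the `Freelancer` fields, the capacity numbers, and the skill increment are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Freelancer:
    name: str
    kind: str        # "grinder" or "balancer"
    skill: float
    capacity: int    # jobs accepted per round (hypothetical parameter)
    assigned: int = 0


def match_greedy(freelancers, n_jobs):
    """Give each job to the best available match: highest skill, then most spare capacity."""
    for _ in range(n_jobs):
        available = [f for f in freelancers if f.assigned < f.capacity]
        if not available:
            break
        best = max(available, key=lambda f: (f.skill, f.capacity - f.assigned))
        best.assigned += 1
        best.skill += 0.1  # assumed: each job builds skill, reinforcing the lead
    return {f.name: f.assigned for f in freelancers}


pool = [
    Freelancer("g1", "grinder", skill=1.0, capacity=5),
    Freelancer("g2", "grinder", skill=1.0, capacity=5),
    Freelancer("b1", "balancer", skill=1.0, capacity=2),
    Freelancer("b2", "balancer", skill=1.0, capacity=2),
]
result = match_greedy(pool, n_jobs=6)
print(result)  # {'g1': 5, 'g2': 1, 'b1': 0, 'b2': 0}
```

Everyone starts with the same skill, yet the grinders' availability plus the skill feedback loop is enough to leave both balancers without work.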

But what if the goal isn’t just to simulate, but to build AI agencies capable of replacing real-world ones, guided by ethically informed policies?

The prototype

The agent policy pipeline acts as a policy factory for human × machine systems:

- It is fully configured in human-readable files (extending the CrewAI multi-agent format).

- It uses observer and reviewer agents responsible not only for system health (similar in scope to classical LLM observability), but also for ethical health—tracking satisfaction and understanding across all agents.

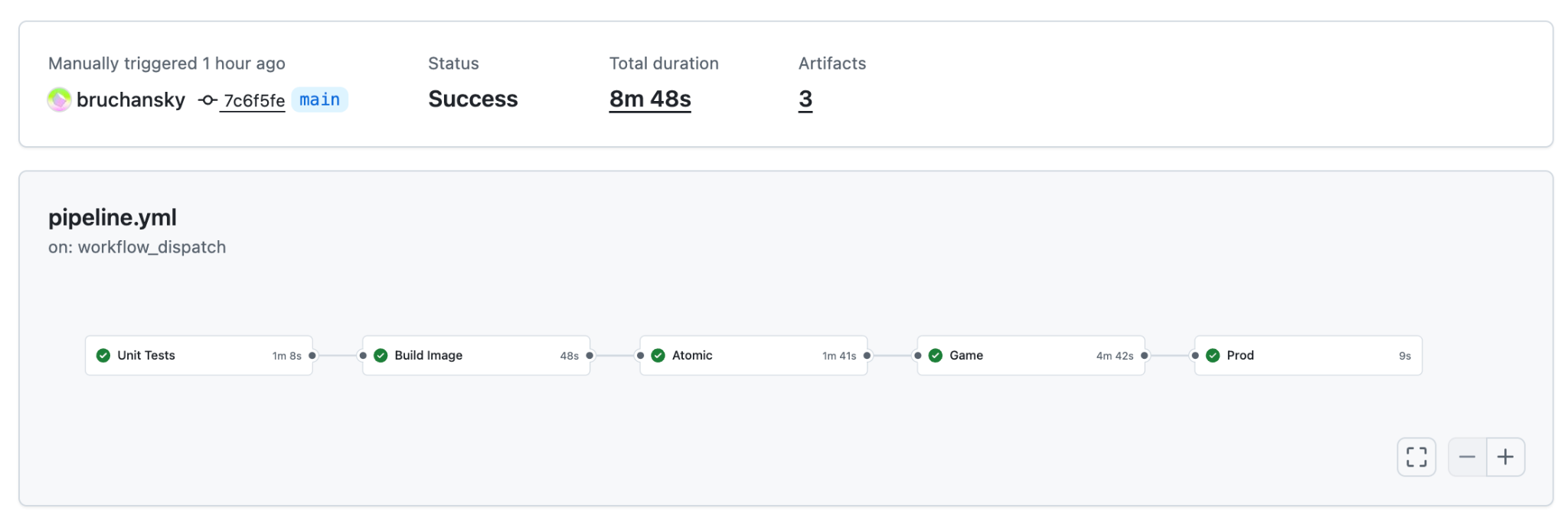

- It runs through a configurable set of environments to simulate practical and ethical scenarios, similar to a CI/CD pipeline (via GitHub Actions), but designed for agents.

- Its output is an AI agent governed by a tested policy, plus a Game Master that serves as the single source of truth (an MCP server on Vultr). Together they form a complete, deployable system.

Policy as prompt

A core feature is treating behavioral policy as a first-class engineering artifact.

In most AI deployments, policies are buried inside system prompts. Here, policy lives in a YAML file alongside agent identity and goals.

You can run the same experiment with different policies, compare outputs, and observe what changes. Policy becomes something you can reason about concretely—diff it, test it, and revert it.
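As a rough illustration of what "diff it" can mean, the sketch below compares two versions of a policy file with Python's standard `difflib`. The YAML content and the `policy` field name are hypothetical; the project's real schema may differ.

```python
import difflib

# Two hypothetical versions of an agency's YAML definition.
POLICY_V1 = """\
role: agency
goal: match freelancers to jobs
policy: assign each job to the best-matching freelancer
"""

POLICY_V2 = """\
role: agency
goal: match freelancers to jobs
policy: prefer the best match, but never leave a freelancer idle for more than two rounds
"""


def policy_diff(old: str, new: str) -> list[str]:
    """Return a unified diff between two policy files, one string per line."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile="policy_v1.yaml",
            tofile="policy_v2.yaml",
            lineterm="",
        )
    )


changes = policy_diff(POLICY_V1, POLICY_V2)
print("\n".join(changes))
```

Because identity and goal are unchanged, the diff isolates the single behavioral line that differs between the two experiment runs.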

Embedded ethics

In DevEthicsOps, I argued for a “Definition of Right” alongside a “Definition of Done”—criteria for whether a system works ethically, not just technically. In this project, that idea takes shape through observer and reviewer agents embedded directly in the pipeline.

The observer runs at every iteration. It checks data integrity, flags anomalies, and can halt the experiment if needed. The reviewer runs afterward, producing a written analysis: Did freelancers feel satisfied? Did they understand the rationale of the system they were in? Or did they experience cognitive dissonance?

These are not monitoring tools bolted on afterward. They are integral to the pipeline and cover dimensions far beyond traditional technical or business KPIs. Their outputs are versioned alongside everything else.
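The observer's per-iteration role could be sketched roughly as follows. This assumes a plain rule-based check rather than an LLM-backed agent, and the `Observer` class, the idle-share threshold, and the `ExperimentHalted` exception are all invented for the example.

```python
from dataclasses import dataclass, field


class ExperimentHalted(Exception):
    """Raised by the observer when an iteration's data looks corrupted."""


@dataclass
class Observer:
    max_idle_share: float = 0.5  # hypothetical anomaly threshold
    flags: list = field(default_factory=list)

    def check(self, iteration: int, jobs_per_freelancer: dict) -> None:
        # Data integrity: a negative job count can only come from a bug.
        if any(n < 0 for n in jobs_per_freelancer.values()):
            raise ExperimentHalted(f"iteration {iteration}: negative job count")
        # Anomaly: too large a share of freelancers got no work this iteration.
        idle = sum(1 for n in jobs_per_freelancer.values() if n == 0)
        if idle / len(jobs_per_freelancer) > self.max_idle_share:
            self.flags.append((iteration, f"{idle} freelancers idle"))


def run(iterations, observer):
    """Feed each iteration's state to the observer; stop as soon as it halts."""
    completed = 0
    try:
        for i, state in enumerate(iterations):
            observer.check(i, state)
            completed += 1
    except ExperimentHalted as halt:
        observer.flags.append((completed, f"halted: {halt}"))
    return completed


observer = Observer()
history = [
    {"g1": 3, "b1": 1, "b2": 1},   # healthy
    {"g1": 5, "b1": 0, "b2": 0},   # two of three idle: flagged
    {"g1": 5, "b1": -1, "b2": 0},  # corrupted: halts the run
    {"g1": 2, "b1": 2, "b2": 1},   # never reached
]
completed = run(history, observer)
print(completed, observer.flags)
```

The flags list is the kind of output a reviewer could read afterward and that could be versioned with the rest of the run.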

Auditable simulations

Each GitHub Actions run produces a set of artifacts:

- An event store — an append-only SQLite log capturing every agent action, iteration, and state transition. Fully queryable.

- An animated visualization — an HTML report showing job flows, skill progression, freelancer utilization, and observer insights over time. You can see exactly what happened, when, and which agent was responsible.

- Reviewer reports — written analyses from reviewer agents, embedded directly in the visualization.

Together, these make ethical analysis tractable.
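An append-only, queryable event log of this kind can be approximated with Python's built-in `sqlite3` module. The table layout, column names, and the triggers that reject updates and deletes are assumptions for this sketch, not the project's actual schema.

```python
import json
import sqlite3

# Hypothetical schema: triggers enforce the append-only property.
SCHEMA = """
CREATE TABLE events (
    id        INTEGER PRIMARY KEY AUTOINCREMENT,
    iteration INTEGER NOT NULL,
    agent     TEXT NOT NULL,
    action    TEXT NOT NULL,
    payload   TEXT NOT NULL
);
CREATE TRIGGER events_no_update BEFORE UPDATE ON events
BEGIN SELECT RAISE(ABORT, 'events is append-only'); END;
CREATE TRIGGER events_no_delete BEFORE DELETE ON events
BEGIN SELECT RAISE(ABORT, 'events is append-only'); END;
"""


def open_store(path=":memory:"):
    db = sqlite3.connect(path)
    db.executescript(SCHEMA)
    return db


def append(db, iteration, agent, action, **payload):
    """Record one agent action; the payload is stored as JSON text."""
    db.execute(
        "INSERT INTO events (iteration, agent, action, payload) VALUES (?, ?, ?, ?)",
        (iteration, agent, action, json.dumps(payload)),
    )


db = open_store()
append(db, 0, "agency_a", "assign_job", job="j1", freelancer="g1")
append(db, 0, "agency_b", "assign_job", job="j2", freelancer="g1")
append(db, 1, "agency_a", "assign_job", job="j3", freelancer="b1")
append(db, 1, "observer", "flag", note="b2 idle")

# Which agent was responsible for how many assignments?
per_agent = db.execute(
    "SELECT agent, COUNT(*) FROM events "
    "WHERE action = 'assign_job' GROUP BY agent ORDER BY agent"
).fetchall()

# Attempts to rewrite history are rejected by the triggers.
try:
    db.execute("DELETE FROM events")
    tamper_blocked = False
except sqlite3.DatabaseError:
    tamper_blocked = True
```

Ordinary SQL is then enough to answer audit questions such as who assigned what and in which iteration.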

What gets deployed

- Autonomous agents — each defined by role, goal, and behavioral policy in YAML. They act on the world through tool calls.

- A Game Master — an MCP server exposing the platform API. In simulation, it is SQLite-backed; in production, it wraps a real backend. The agent code remains unchanged.
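The claim that agent code stays unchanged across backends can be illustrated with a small interface sketch. It deliberately leaves out the MCP layer: `Platform`, `SqlitePlatform`, `DictPlatform`, and `agency_step` are hypothetical names, and `DictPlatform` merely stands in for a wrapper around a real backend.

```python
import sqlite3
from typing import Protocol


class Platform(Protocol):
    """The operations the agent relies on, whatever sits behind them."""

    def open_jobs(self) -> list[str]: ...
    def assign(self, job: str, freelancer: str) -> None: ...


class SqlitePlatform:
    """Simulation backend: state lives in an in-memory SQLite database."""

    def __init__(self, jobs):
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE jobs (name TEXT PRIMARY KEY, freelancer TEXT)")
        self.db.executemany("INSERT INTO jobs (name) VALUES (?)", [(j,) for j in jobs])

    def open_jobs(self):
        rows = self.db.execute(
            "SELECT name FROM jobs WHERE freelancer IS NULL ORDER BY name"
        )
        return [name for (name,) in rows]

    def assign(self, job, freelancer):
        self.db.execute(
            "UPDATE jobs SET freelancer = ? WHERE name = ?", (freelancer, job)
        )


class DictPlatform:
    """Stand-in for a production wrapper; a real one would call the backend."""

    def __init__(self, jobs):
        self.jobs = {j: None for j in jobs}

    def open_jobs(self):
        return sorted(j for j, f in self.jobs.items() if f is None)

    def assign(self, job, freelancer):
        self.jobs[job] = freelancer


def agency_step(platform: Platform, freelancer: str) -> list[str]:
    """Agent-side logic: identical regardless of which backend is plugged in."""
    jobs = platform.open_jobs()
    if jobs:
        platform.assign(jobs[0], freelancer)
    return platform.open_jobs()


sim_left = agency_step(SqlitePlatform(["j1", "j2"]), "g1")
prod_left = agency_step(DictPlatform(["j1", "j2"]), "g1")
```

In the described system the agent would reach these operations through tool calls on the MCP server rather than through direct method calls; the point of the sketch is only that the swap happens behind a stable interface.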

The vision

This YAML-driven approach opens the door for AI tools (such as Claude Code) to iterate over environments, agents, and policies in an automated or semi-automated way.

In the job market scenario, could agents designed this way reshape—or even replace—parts of existing businesses, guided by policies that are far more flexible and robust than traditional regulation?

And more fundamentally: which governs human collaboration better—the new AI agents we design, or the much older artificial constructs we call organizations and corporations?